Why CubeSat testing needs a process rethink

The era of one-off test campaigns is ending. Here’s what comes next.

Something fundamental changed in the satellite supply chain over the last decade — but only half the industry noticed.

Launch costs fell from over $100,000 per kilogram to well under $2,000. Rideshare became routine. CubeSat platforms matured from university experiments into commercial products. The barriers that once made space inaccessible to smaller players collapsed, one by one.

The result: more spacecraft, shorter cycles, and faster iteration than anyone in traditional aerospace was built to handle.

But there’s a part of the pipeline that didn’t evolve at the same speed. And it’s increasingly becoming the bottleneck.

The bottleneck no one is talking about loudly enough

When launch capacity scales, the constraint shifts upstream. Today, the question isn’t whether you can get your CubeSat to orbit — it’s whether you can qualify it fast enough to make your launch window.

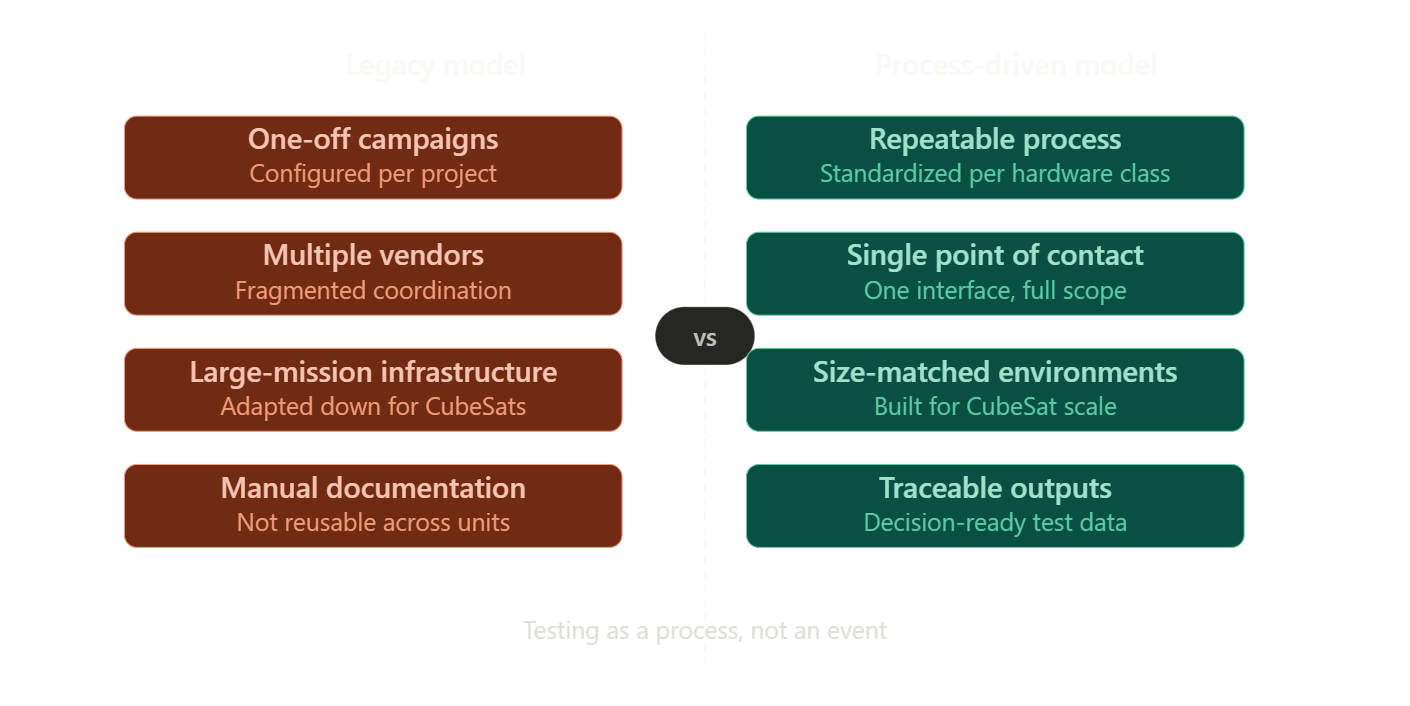

Hardware development timelines have compressed. Production is increasingly serial. But the verification and qualification processes many teams rely on still reflect a world where every satellite was a bespoke project, managed by a dedicated team, on a multi-year timeline.

Manual setups. Project-specific documentation stacks. Test infrastructure calibrated for large, infrequent missions rather than small, frequent ones. Long lead times for slot availability. Coordination spread across multiple labs for different test types.

That’s not a CubeSat-era workflow. That’s legacy aerospace in a NewSpace supply chain.

What “one-off” actually costs you

The traditional test campaign model treats every test as its own event. You configure, you document, you execute, you compile — then you start again for the next item. It works. But it doesn’t scale.

For a team building one satellite every two years, that’s acceptable overhead. For a team building constellations, or spinning through hardware revisions on six-month cycles, it’s a serious drag.

The costs are rarely visible on a single campaign. They accumulate in rescheduling loops when test slots don’t align with development milestones, in documentation that can’t be reused across units, in missing comparative data across iterations, and in test infrastructure that wasn’t sized for the hardware it’s now being asked to verify.

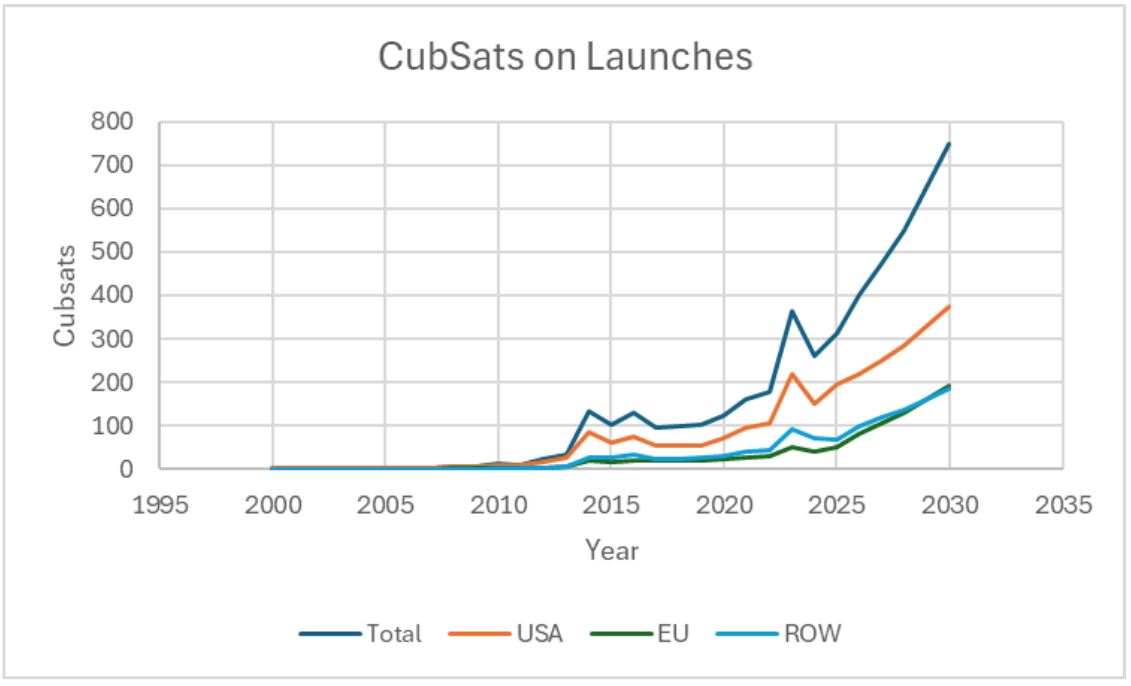

The CubeSat market grew at roughly 20% CAGR between 2020 and 2024. The testing infrastructure serving it did not.

Testing as a process, not an event

The shift that’s needed isn’t about adding more test capacity — it’s about rethinking how verification is structured.

A process-driven model starts from a different premise: that test execution should be repeatable, traceable, and size-matched by design. Not configured from scratch each time.

In practice, this means:

Standardized environments. Test setups built around hardware classes — not adapted to each unit individually. If your hardware is a 3U CubeSat, it should slot into a standardized setup, not require a custom configuration.

Single-point coordination. One contact, one interface for requirements intake, scheduling, execution, and data delivery. Not a multi-vendor coordination problem.

Lean execution. 5S-driven lab organization, structured workflows, minimal handoffs. The kind of operational discipline that manufacturing adopted decades ago — applied to verification.

Decision-ready outputs. Test results that support go/no-go engineering decisions directly, not documentation packages that need significant processing before they’re useful.

This isn’t a radical reimagination. It’s applying process thinking that works in other high-throughput technical fields — and has been conspicuously absent from space hardware verification.
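
As a rough illustration of how standardized environments and decision-ready outputs fit together, the sketch below keys a set of acceptance limits to a hardware class and reduces a set of measurements to a go/no-go result. Everything in it is an assumption made for illustration: the `Limit` and `PROFILES` names, the measured quantities, and the numeric limits are placeholders, not values from any standard, launch provider, or facility.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limit:
    """Acceptance band for one measured quantity (hypothetical)."""
    name: str
    minimum: float
    maximum: float


# Hypothetical profiles keyed by hardware class. The numbers are
# placeholders chosen for the example, not real qualification levels.
PROFILES = {
    "3U": (
        Limit("first_resonance_hz", 100.0, float("inf")),
        Limit("resonance_shift_pct", 0.0, 5.0),
    ),
    "6U": (
        Limit("first_resonance_hz", 90.0, float("inf")),
        Limit("resonance_shift_pct", 0.0, 5.0),
    ),
}


def evaluate(hardware_class, measurements):
    """Check measurements against the class profile and return a decision."""
    failures = []
    for limit in PROFILES[hardware_class]:
        value = measurements.get(limit.name)
        if value is None:
            failures.append(f"{limit.name}: no measurement")
        elif not limit.minimum <= value <= limit.maximum:
            failures.append(f"{limit.name}: {value} outside limits")
    return {"decision": "NO-GO" if failures else "GO", "failures": failures}


report = evaluate("3U", {"first_resonance_hz": 142.0, "resonance_shift_pct": 7.5})
print(report["decision"], report["failures"])
```

The point of the design is that the profile is selected by hardware class rather than written per unit, so the same check runs unchanged on every 3U that comes through, and the output names the failing quantity instead of leaving it buried in a report.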

What this looks like at CubeSat scale

The hardware scope matters here. A CubeSat testing process doesn’t need to solve every aerospace qualification scenario — it needs to solve the specific scenarios that come up repeatedly at 1U to 16U scale.

Vibration. Thermal vacuum. Pyroshock. EMC/emissions. Each of these has a defined envelope for CubeSat-class hardware. Building infrastructure that’s purpose-sized for that envelope — rather than adapting large-mission facilities downward — changes both throughput and cost structure.

It also changes the data profile. When the same test type runs repeatedly on comparable hardware, you accumulate reference data. You start to understand what failure modes look like at this scale, for this component class, in this flight environment. That context is valuable to developers, and it doesn’t exist in a one-off model.
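
One simple way to picture the value of accumulated reference data: once several comparable units have run the same test, a new result can be placed against that history. The sketch below is a minimal example under stated assumptions. The function name `deviation_from_reference` and the sample values are hypothetical, and a plain standard-deviation comparison stands in for whatever statistics a real lab would use.

```python
from statistics import mean, stdev


def deviation_from_reference(history, new_value):
    """Return how many sample standard deviations new_value lies from
    the mean of earlier runs of the same test on comparable hardware."""
    if len(history) < 2:
        raise ValueError("need at least two prior runs to form a reference")
    spread = stdev(history)
    if spread == 0:
        # All prior runs agree exactly; any difference is off the scale.
        return 0.0 if new_value == history[0] else float("inf")
    return (new_value - mean(history)) / spread


# Hypothetical first-resonance readings (Hz) from earlier units.
prior_runs = [138.0, 142.0, 146.0]
score = deviation_from_reference(prior_runs, 150.0)
print(f"new unit is {score:.1f} standard deviations from the reference mean")
```

In a one-off campaign there is no `prior_runs` list to compare against, which is the gap the paragraph above describes.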

The supply chain gap is real — and it’s opening wider

CubeSat launch volumes will continue growing. The economics driving the NewSpace transition aren’t reversing. Which means the pressure on verification infrastructure will increase, not decrease.

The organizations that recognize this now — and build testing capability that reflects how small satellite development actually works — will be the ones their customers depend on when schedules tighten and launch windows don’t move.

NewSpace needs new testing. Not the same old model with a smaller price tag.

Bavarian Space Lab by enveon is being built in Munich as a dedicated CubeSat and small satellite verification facility — structured, process-driven, and sized for NewSpace development cycles. We’re currently scheduling early test slots and building relationships with teams who want to align early.